Uncaught Typeerror: I Is Not a Function Google Login

Troubleshooting Cloud Functions

This document shows you some of the common problems you might run into and how to deal with them.

Deployment

The deployment stage is a frequent source of problems. Many of the issues you might encounter during deployment are related to roles and permissions. Others have to do with incorrect configuration.

User with Viewer role cannot deploy a function

A user who has been assigned the Project Viewer or Cloud Functions Viewer role has read-only access to functions and function details. These roles are not allowed to deploy new functions.

The error message

Cloud Console

You need permissions for this action. Required permission(s): cloudfunctions.functions.create

Cloud SDK

ERROR: (gcloud.functions.deploy) PERMISSION_DENIED: Permission 'cloudfunctions.functions.sourceCodeSet' denied on resource 'projects/<PROJECT_ID>/locations/<LOCATION>` (or resource may not exist)

The solution

Assign the user a role that has the appropriate access.

User with Project Viewer or Cloud Function role cannot deploy a function

In order to deploy a function, a user who has been assigned the Project Viewer, the Cloud Function Developer, or Cloud Function Admin role must be assigned an additional role.

The error message

Cloud Console

User does not have the iam.serviceAccounts.actAs permission on <PROJECT_ID>@appspot.gserviceaccount.com required to create function. You can fix this by running 'gcloud iam service-accounts add-iam-policy-binding <PROJECT_ID>@appspot.gserviceaccount.com --member=user: --role=roles/iam.serviceAccountUser'

Cloud SDK

ERROR: (gcloud.functions.deploy) ResponseError: status=[403], code=[Forbidden], message=[Missing necessary permission iam.serviceAccounts.actAs for <USER> on the service account <PROJECT_ID>@appspot.gserviceaccount.com. Ensure that service account <PROJECT_ID>@appspot.gserviceaccount.com is a member of the project <PROJECT_ID>, and then grant <USER> the role 'roles/iam.serviceAccountUser'. You can do that by running 'gcloud iam service-accounts add-iam-policy-binding <PROJECT_ID>@appspot.gserviceaccount.com --member=<USER> --role=roles/iam.serviceAccountUser' In case the member is a service account please use the prefix 'serviceAccount:' instead of 'user:'.]

The solution

Assign the user an additional role, the Service Account User IAM role (roles/iam.serviceAccountUser), scoped to the Cloud Functions runtime service account.

Deployment service account missing the Service Agent role when deploying functions

The Cloud Functions service uses the Cloud Functions Service Agent service account (service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com) when performing administrative actions on your project. By default this account is assigned the Cloud Functions cloudfunctions.serviceAgent role. This role is required for Cloud Pub/Sub, IAM, Cloud Storage and Firebase integrations. If you have changed the role for this service account, deployment fails.

The error message

Cloud Console

Missing necessary permission resourcemanager.projects.getIamPolicy for serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com on project <PROJECT_ID>. Please grant serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com the roles/cloudfunctions.serviceAgent role. You can do that by running 'gcloud projects add-iam-policy-binding <PROJECT_ID> --member=serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com --role=roles/cloudfunctions.serviceAgent'

Cloud SDK

ERROR: (gcloud.functions.deploy) OperationError: code=7, message=Missing necessary permission resourcemanager.projects.getIamPolicy for serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com on project <PROJECT_ID>. Please grant serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com the roles/cloudfunctions.serviceAgent role. You can do that by running 'gcloud projects add-iam-policy-binding <PROJECT_ID> --member=serviceAccount:service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com --role=roles/cloudfunctions.serviceAgent'

The solution

Reset this service account to the default role.

Deployment service account missing Pub/Sub permissions when deploying an event-driven function

The Cloud Functions service uses the Cloud Functions Service Agent service account (service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com) when performing administrative actions. By default this account is assigned the Cloud Functions cloudfunctions.serviceAgent role. To deploy event-driven functions, the Cloud Functions service must access Cloud Pub/Sub to configure topics and subscriptions. If the role assigned to the service account is changed and the appropriate permissions are not otherwise granted, the Cloud Functions service cannot access Cloud Pub/Sub and the deployment fails.

The error message

Cloud Console

Failed to configure trigger PubSub projects/<PROJECT_ID>/topics/<FUNCTION_NAME>

Cloud SDK

ERROR: (gcloud.functions.deploy) OperationError: code=13, message=Failed to configure trigger PubSub projects/<PROJECT_ID>/topics/<FUNCTION_NAME>

The solution

You can:

- Reset this service account to the default role.

or

- Grant the pubsub.subscriptions.* and pubsub.topics.* permissions to your service account manually.

User missing permissions for runtime service account while deploying a function

In environments where multiple functions are accessing different resources, it is a common practice to use per-function identities, with named runtime service accounts rather than the default runtime service account (PROJECT_ID@appspot.gserviceaccount.com).

However, to use a non-default runtime service account, the deployer must have the iam.serviceAccounts.actAs permission on that non-default account. A user who creates a non-default runtime service account is automatically granted this permission, but other deployers must have this permission granted by a user with the correct permissions.

The error message

Cloud SDK

ERROR: (gcloud.functions.deploy) ResponseError: status=[400], code=[Bad Request], message=[Invalid function service account requested: <SERVICE_ACCOUNT_NAME@<PROJECT_ID>.iam.gserviceaccount.com]

The solution

Assign the user the roles/iam.serviceAccountUser role on the non-default <SERVICE_ACCOUNT_NAME> runtime service account. This role includes the iam.serviceAccounts.actAs permission.

Runtime service account missing project bucket permissions while deploying a function

Cloud Functions can only be triggered by events from Cloud Storage buckets in the same Google Cloud Platform project. In addition, the Cloud Functions Service Agent service account (service-<PROJECT_NUMBER>@gcf-admin-robot.iam.gserviceaccount.com) needs a cloudfunctions.serviceAgent role on your project.

The error message

Cloud Console

Deployment failure: Insufficient permissions to (re)configure a trigger (permission denied for bucket <BUCKET_ID>). Please, give owner permissions to the editor role of the bucket and try again.

Cloud SDK

ERROR: (gcloud.functions.deploy) OperationError: code=7, message=Insufficient permissions to (re)configure a trigger (permission denied for bucket <BUCKET_ID>). Please, give owner permissions to the editor role of the bucket and try again.

The solution

You can:

- Reset this service account to the default role.

or

- Grant the runtime service account the cloudfunctions.serviceAgent role.

or

- Grant the runtime service account the storage.buckets.{get, update} and the resourcemanager.projects.get permissions.

User with Project Editor role cannot make a function public

To ensure that unauthorized developers cannot alter authentication settings for function invocations, the user or service that is deploying the function must have the cloudfunctions.functions.setIamPolicy permission.

The error message

Cloud SDK

ERROR: (gcloud.functions.add-iam-policy-binding) ResponseError: status=[403], code=[Forbidden], message=[Permission 'cloudfunctions.functions.setIamPolicy' denied on resource 'projects/<PROJECT_ID>/locations/<LOCATION>/functions/<FUNCTION_NAME> (or resource may not exist).]

The solution

You can:

- Assign the deployer either the Project Owner or the Cloud Functions Admin role, both of which contain the cloudfunctions.functions.setIamPolicy permission.

or

- Grant the permission manually by creating a custom role.

Function deployment fails due to Cloud Build not supporting VPC-SC

Cloud Functions uses Cloud Build to build your source code into a runnable container. In order to use Cloud Functions with VPC Service Controls, you must configure an access level for the Cloud Build service account in your service perimeter.

The error message

Cloud Console

One of the following:

Error in the build environment

OR

Unable to build your function due to VPC Service Controls. The Cloud Build service account associated with this function needs an appropriate access level on the service perimeter. Please grant access to the Cloud Build service account: '{PROJECT_NUMBER}@cloudbuild.gserviceaccount.com' by following the instructions at https://cloud.google.com/functions/docs/securing/using-vpc-service-controls#grant-build-access

Cloud SDK

One of the following:

ERROR: (gcloud.functions.deploy) OperationError: code=13, message=Error in the build environment

OR

Unable to build your function due to VPC Service Controls. The Cloud Build service account associated with this function needs an appropriate access level on the service perimeter. Please grant access to the Cloud Build service account: '{PROJECT_NUMBER}@cloudbuild.gserviceaccount.com' by following the instructions at https://cloud.google.com/functions/docs/securing/using-vpc-service-controls#grant-build-access

The solution

If your project's Audited Resources logs mention "Request is prohibited by organization's policy" in the VPC Service Controls section and have a Cloud Storage label, you need to grant the Cloud Build Service Account access to the VPC Service Controls perimeter.

Function deployment fails due to IPv6 addresses not permitted in VPC-SC

Cloud Functions can use IPv6 addresses for outbound requests to Cloud Storage. If you use VPC Service Controls and IPv6 addresses are not permitted in your service perimeter, this can cause failures with function deployment or execution. In order to use VPC Service Controls with Cloud Functions and IPv6 addresses, you must configure an access level to allow IPv6 addresses in your service perimeter.

The error message

In Audited Resources logs, an entry similar to the following:

"protoPayload": {
  "status": {
    "message": "PERMISSION_DENIED",
    "details": [
      {
        "@type": "type.googleapis.com/google.rpc.PreconditionFailure",
        "violations": [
          {
            "type": "VPC_SERVICE_CONTROLS",
  ...
  "requestMetadata": {
    "callerIp": "IPv6_ADDRESS",
  ...
  "serviceName": "storage.googleapis.com",
  "methodName": "google.storage.buckets.get",
  "metadata": {
    "@type": "type.googleapis.com/google.cloud.audit.VpcServiceControlAuditMetadata",
    "violationReason": "NO_MATCHING_ACCESS_LEVEL",
  ...

The solution

To specifically allow requests from Cloud Functions and not the entire internet, permit the range 2600:1900::/28 to access your VPC-SC perimeter by configuring an access level for this range.

Function deployment fails due to incorrectly specified entry point

Cloud Functions deployment can fail if the entry point to your code, that is, the exported function name, is not specified correctly.

The error message

Cloud Console

Deployment failure: Function failed on loading user code. Error message: Error: please examine your function logs to see the error cause: https://cloud.google.com/functions/docs/monitoring/logging#viewing_logs

Cloud SDK

ERROR: (gcloud.functions.deploy) OperationError: code=3, message=Function failed on loading user code. Error message: Please examine your function logs to see the error cause: https://cloud.google.com/functions/docs/monitoring/logging#viewing_logs

The solution

Your source code must contain an entry point function that has been correctly specified in your deployment, either via Cloud Console or Cloud SDK.
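As a rough local pre-deployment check, the sketch below tests whether a module exposes a callable under the name you plan to pass as the entry point. The helper `entry_point_exists`, the stand-in module `main`, and the function `hello_http` are hypothetical names used only for illustration. With a real project you would import your own source module instead.

```python
import types


def entry_point_exists(module: types.ModuleType, entry_point: str) -> bool:
    """Return True if `module` exposes a callable named `entry_point`."""
    return callable(getattr(module, entry_point, None))


# Stand-in for your real source module (hypothetical; normally `import main`).
main = types.ModuleType("main")


def hello_http(request):
    """Example HTTP function; its name is what the entry point must match."""
    return "Hello World!"


main.hello_http = hello_http

found = entry_point_exists(main, "hello_http")   # exact name
missing = entry_point_exists(main, "helloHttp")  # differs in spelling and case
print(found, missing)
```

The lookup is case-sensitive, so a name that differs only in capitalization counts as missing.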

Function deployment fails when using Resource Location Constraint organization policy

If your organization uses a Resource Location Constraint policy, you may see this error in your logs. It indicates that the deployment pipeline failed to create a multi-regional storage bucket.

The error message

In Cloud Build logs:

Token exchange failed for project '<PROJECT_ID>'. Org Policy Violated: '<REGION>' violates constraint 'constraints/gcp.resourceLocations'

In Cloud Storage logs:

<REGION>.artifacts.<PROJECT_ID>.appspot.com storage bucket could not be created.

The solution

If you are using constraints/gcp.resourceLocations in your organization policy constraints, you should specify the appropriate multi-region location. For example, if you are deploying in any of the us regions, you should use us-locations.

However, if you require more fine-grained control and want to restrict function deployment to a single region (not multiple regions), create the multi-region bucket first:

- Allow the whole multi-region

- Deploy a test function

- After the deployment has succeeded, change the organizational policy back to permit only the specific region.

The multi-region storage bucket stays available for that region, so that subsequent deployments can succeed. If you later decide to allowlist a region outside of the one where the multi-region storage bucket was created, you must repeat the process.

Function deployment fails while executing function's global scope

This error indicates that there was a problem with your code. The deployment pipeline finished deploying the function, but failed at the last step - sending a health check to the function. This health check is meant to execute a function's global scope, which could be throwing an exception, crashing, or timing out. The global scope is where you normally load in libraries and initialize clients.

The error message

In Cloud Logging logs:

"Function failed on loading user code. This is likely due to a bug in the user code."

The solution

For a more detailed error message, look into your function's build logs, as well as your function's runtime logs. If it is unclear why your function failed to execute its global scope, consider temporarily moving the code into the request invocation, using lazy initialization of the global variables. This allows you to add extra log statements around your client libraries, which could be timing out on their instantiation (especially if they are calling other services), or crashing/throwing exceptions altogether.
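A minimal sketch of the lazy initialization described above, assuming a Python function. `SlowClient` is a hypothetical stand-in for whatever client library your function constructs, and `get_client` and `handler` are illustrative names. The point is that construction happens on first use inside the request, surrounded by log statements, rather than at import time.

```python
import logging
import time

_client = None  # populated on first use instead of at import time


class SlowClient:
    """Hypothetical stand-in for a client that may be slow or fail to construct."""

    def query(self, name: str) -> str:
        return f"Hello {name}!"


def get_client() -> SlowClient:
    """Create the client on first call and reuse it on later calls."""
    global _client
    if _client is None:
        logging.info("initializing client")
        start = time.monotonic()
        _client = SlowClient()
        logging.info("client ready after %.3fs", time.monotonic() - start)
    return _client


def handler(request):
    """Request handler: initialization now happens here, not in global scope."""
    return get_client().query("World")
```

Because the instance is cached in a module-level variable, later invocations on the same function instance reuse it and only the first request pays the construction cost.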

Build

When you deploy your function's source code to Cloud Functions, that source is stored in a Cloud Storage bucket. Cloud Build then automatically builds your code into a container image and pushes that image to Container Registry. Cloud Functions accesses this image when it needs to run the container to execute your function.

Build failed due to missing Container Registry Images

Cloud Functions uses Container Registry to manage images of the functions. Container Registry uses Cloud Storage to store the layers of the images in buckets named STORAGE-REGION.artifacts.PROJECT-ID.appspot.com. Using Object Lifecycle Management on these buckets breaks the deployment of the functions as the deployments depend on these images being present.

The error message

Cloud Console

Build failed: Build error details not available. Please check the logs at <CLOUD_CONSOLE_LINK>

CLOUD_CONSOLE_LINK contains an error similar to the one below: failed to get OS from config file for image 'us.gcr.io/<PROJECT_ID>/gcf/us-central1/<UUID>/worker:latest'

Cloud SDK

ERROR: (gcloud.functions.deploy) OperationError: code=13, message=Build failed: Build error details not available. Please check the logs at <CLOUD_CONSOLE_LINK>

CLOUD_CONSOLE_LINK contains an error similar to the one below: failed to get OS from config file for image 'us.gcr.io/<PROJECT_ID>/gcf/us-central1/<UUID>/worker:latest'

The solution

- Disable Lifecycle Management on the buckets required by Container Registry.

- Delete all the images of affected functions. You can access build logs to find the image paths. Reference script to bulk delete the images. Note that this does not affect the functions that are currently deployed.

- Redeploy the functions.

Serving

The serving phase can also be a source of errors.

Serving permission error due to the function being private

Cloud Functions allows you to declare functions private, that is, to restrict access to end users and service accounts with the appropriate permission. By default deployed functions are set as private. This error message indicates that the caller does not have permission to invoke the function.

The error message

HTTP Error Response code: 403 Forbidden

HTTP Error Response body: Error: Forbidden Your client does not have permission to get URL /<FUNCTION_NAME> from this server.

The solution

You can:

- Allow public (unauthenticated) access to all users for the specific function.

or

- Assign the user the Cloud Functions Invoker Cloud IAM role for all functions.

Serving permission error due to "allow internal traffic only" configuration

Ingress settings restrict whether an HTTP function can be invoked by resources outside of your Google Cloud project or VPC Service Controls service perimeter. When the "allow internal traffic only" setting for ingress networking is configured, this error message indicates that only requests from VPC networks in the same project or VPC Service Controls perimeter are allowed.

The error message

HTTP Error Response code: 403 Forbidden

HTTP Error Response body: Error 403 (Forbidden) 403. That's an error. Access is forbidden. That's all we know.

The solution

You can:

- Ensure that the request is coming from your Google Cloud project or VPC Service Controls service perimeter.

or

- Change the ingress settings to allow all traffic for the function.

Function invocation lacks valid authentication credentials

Invoking a Cloud Functions function that has been set up with restricted access requires an ID token. Access tokens or refresh tokens do not work.

The error message

HTTP Error Response code: 401 Unauthorized

HTTP Error Response body: Your client does not have permission to the requested URL

The solution

Make sure that your requests include an Authorization: Bearer ID_TOKEN header, and that the token is an ID token, not an access or refresh token. If you are generating this token manually with a service account's private key, you must exchange the self-signed JWT token for a Google-signed Identity token, following this guide.
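When a 401 persists, it can help to check what kind of token you are actually sending. The sketch below is a debugging aid under stated assumptions: it decodes the JWT payload without verifying the signature, and it assumes the ID token's `aud` claim should equal the function URL. The helper names (`decode_jwt_claims`, `looks_like_id_token`, `auth_header`) are illustrative and not part of any Google library.

```python
import base64
import json


def decode_jwt_claims(token: str) -> dict:
    """Decode a JWT payload WITHOUT verifying the signature (debugging only)."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a JWT: expected three dot-separated segments")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore stripped base64 padding
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if not isinstance(claims, dict):
        raise ValueError("JWT payload is not a JSON object")
    return claims


def looks_like_id_token(token: str, function_url: str) -> bool:
    """Return True if the token parses as a JWT whose audience is the function URL."""
    try:
        claims = decode_jwt_claims(token)
    except ValueError:  # also covers malformed base64, UTF-8, and JSON
        return False
    return claims.get("aud") == function_url


def auth_header(id_token: str) -> dict:
    """Build the header described above."""
    return {"Authorization": f"Bearer {id_token}"}
```

An opaque access token typically fails the three-segment check, so `looks_like_id_token` returns False for it. This only inspects the token's shape and audience. It does not establish that the token is valid or unexpired.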

Attempt to invoke function using curl redirects to Google login page

If you attempt to invoke a function that does not exist, Cloud Functions responds with an HTTP/2 302 redirect which takes you to the Google account login page. This is incorrect. It should respond with an HTTP/2 404 error response code. The problem is being addressed.

The solution

Make sure you specify the name of your function correctly. You can always check using gcloud functions call, which returns the correct 404 error for a missing function.

Application crashes and function execution fails

This error indicates that the process running your function has died. This is usually due to the runtime crashing because of issues in the function code. This may also happen when a deadlock or some other condition in your function's code causes the runtime to become unresponsive to incoming requests.

The error message

In Cloud Logging logs: "Infrastructure cannot communicate with function. There was likely a crash or deadlock in the user-provided code."

The solution

Different runtimes can crash under different scenarios. To find the root cause, output detailed debug level logs, check your application logic, and test for edge cases.

The Cloud Functions Python37 runtime currently has a known limitation on the rate that it can handle logging. If log statements from a Python37 runtime instance are written at a sufficiently high rate, it can produce this error. Python runtime versions >= 3.8 do not have this limitation. We encourage users to migrate to a higher version of the Python runtime to avoid this issue.

If you are still uncertain about the cause of the error, check out our support page.

Function stops mid-execution, or continues running after your code finishes

Some Cloud Functions runtimes allow users to run asynchronous tasks. If your function creates such tasks, it must also explicitly wait for these tasks to complete. Failure to do so may cause your function to stop executing at the wrong time.

The error behavior

Your function exhibits one of the following behaviors:

- Your function terminates while asynchronous tasks are still running, but before the specified timeout period has elapsed.

- Your function does not stop running when these tasks finish, and continues to run until the timeout period has elapsed.

The solution

If your function terminates early, you should make sure all your function's asynchronous tasks have been completed before doing any of the following:

- returning a value

- resolving or rejecting a returned Promise object (Node.js functions only)

- throwing uncaught exceptions and/or errors

- sending an HTTP response

- calling a callback function

If your function fails to terminate once all asynchronous tasks have completed, you should verify that your function is correctly signaling Cloud Functions once it has completed. In particular, make sure that you perform one of the operations listed above as soon as your function has finished its asynchronous tasks.
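The rule above (finish all asynchronous work, then signal completion right away) can be sketched in Python with the standard library's thread pool. This is an illustration under assumptions: `background_task` and `handler` are hypothetical names, and your function may use a different concurrency mechanism.

```python
from concurrent.futures import ThreadPoolExecutor, wait


def background_task(n: int) -> int:
    """Hypothetical unit of asynchronous work."""
    return n * n


def handler(request) -> str:
    """Start background work, wait for all of it, then return immediately."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(background_task, n) for n in range(3)]
        # Block until every task has finished before producing the response.
        done, not_done = wait(futures)
    # result() re-raises any exception a task threw, so failures surface
    # while the function is still executing rather than after it returned.
    values = sorted(f.result() for f in done)
    return f"done: {values}"
```

Returning only after `wait` completes addresses the early-termination case, and returning immediately afterwards addresses the case where the function keeps running until the timeout.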

JavaScript heap out of memory

For Node.js 12+ functions with memory limits greater than 2GiB, users need to configure NODE_OPTIONS to have max_old_space_size so that the JavaScript heap limit is equivalent to the function's memory limit.

The error message

Cloud Console

FATAL ERROR: CALL_AND_RETRY_LAST Allocation failed - JavaScript heap out of memory

The solution

Deploy your Node.js 12+ function, with NODE_OPTIONS configured to have max_old_space_size set to your function's memory limit. For example:

gcloud functions deploy envVarMemory \
--runtime nodejs16 \
--set-env-vars NODE_OPTIONS="--max_old_space_size=8192" \
--memory 8Gi \
--trigger-http

Function terminated

You may see one of the following error messages when the process running your code exited either due to a runtime error or a deliberate exit. There is also a small chance that a rare infrastructure error occurred.

The error messages

Function invocation was interrupted. Error: function terminated. Recommended action: inspect logs for termination reason. Additional troubleshooting information can be found in Logging.

Request rejected. Error: function terminated. Recommended action: inspect logs for termination reason. Additional troubleshooting information can be found in Logging.

Function cannot be initialized. Error: function terminated. Recommended action: inspect logs for termination reason. Additional troubleshooting information can be found in Logging.

The solution

- For a background (Pub/Sub triggered) function when an executionID is associated with the request that ended up in error, try enabling retry on failure. This allows the retrying of function execution when a retriable exception is raised. For more information on how to use this option safely, including mitigations for avoiding infinite retry loops and managing retriable/fatal errors differently, see Best Practices.

- Background activity (anything that happens after your function has terminated) can cause issues, so check your code. Cloud Functions does not guarantee any actions other than those that run during the execution period of the function, so even if an activity runs in the background, it might be terminated by the cleanup process.

- In cases when there is a sudden traffic spike, try spreading the workload over a little more time. Also test your functions locally using the Functions Framework before you deploy to Cloud Functions to ensure that the error is not due to missing or conflicting dependencies.

Scalability

Scaling issues related to Cloud Functions infrastructure can arise in several circumstances.

The following conditions can be associated with scaling failures.

- A huge sudden increase in traffic.

- A long cold start time.

- A long request processing time.

- High function error rate.

- Reaching the maximum instance limit and hence the system cannot scale any further.

- Transient factors attributed to the Cloud Functions service.

In each case Cloud Functions might not scale up fast enough to manage the traffic.

The error message

The request was aborted because there was no available instance

- severity=WARNING (Response code: 429) Cloud Functions cannot scale due to the max-instances limit you set during configuration.

- severity=ERROR (Response code: 500) Cloud Functions intrinsically cannot manage the rate of traffic.

The solution

- For HTTP trigger-based functions, have the client implement exponential backoff and retries for requests that must not be dropped.

- For background / event-driven functions, Cloud Functions supports at-least-once delivery. Even without explicitly enabling retry, the event is automatically re-delivered and the function execution will be retried. See Retrying Event-Driven Functions for more information.

- When the root cause of the issue is a period of heightened transient errors attributed solely to Cloud Functions or if you need assistance with your issue, please contact support.
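For the HTTP case above, a client-side retry loop with exponential backoff might look like the following sketch. `RetryableError` and `call_with_backoff` are hypothetical names, and the delays and attempt count are placeholder values to tune. In a real client you would raise `RetryableError` when the response code is 429 or 500.

```python
import random
import time


class RetryableError(Exception):
    """Hypothetical error for responses worth retrying (for example 429 or 500)."""


def call_with_backoff(send, max_attempts=5, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep):
    """Call `send()` until it succeeds, backing off exponentially between tries."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RetryableError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Double the delay ceiling on each attempt, up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(random.uniform(0, ceiling))
```

The `sleep` parameter is injectable so the loop can be exercised without real waiting. Production callers can leave it at the default.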

Logging

Setting up logging to help you track down issues can cause problems of its own.

Log entries have no, or wrong, log severity levels

Cloud Functions includes simple runtime logging by default. Logs written to stdout or stderr appear automatically in the Cloud Console. But these log entries, by default, contain only simple string messages.

The error message

No or wrong severity levels in logs.

The solution

To include log severities, you must send a structured log entry instead.
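One way to send a structured entry from Python is to write a single line of JSON to stdout, on the assumption that the logging agent reads the `severity` and `message` fields from it. `log_structured` is a hypothetical helper, and `component` is an arbitrary extra field chosen for illustration.

```python
import json
import sys


def log_structured(severity: str, message: str, **fields) -> str:
    """Write one JSON log line to stdout and return it."""
    entry = {"severity": severity, "message": message, **fields}
    line = json.dumps(entry)
    # One entry per line; flush so the line is not left sitting in a buffer.
    print(line, file=sys.stdout, flush=True)
    return line


line = log_structured("ERROR", "payment failed", component="checkout")
```

Serializing with `json.dumps` keeps each entry on a single line even when the message contains newlines, because they are escaped in the output.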

Handle or log exceptions differently in the event of a crash

You may want to customize how you manage and log crash information.

The solution

Wrap your function in a try/catch block to customize handling exceptions and logging stack traces.

Example

import logging
import traceback

def try_catch_log(wrapped_func):
    def wrapper(*args, **kwargs):
        try:
            response = wrapped_func(*args, **kwargs)
        except Exception:
            # Replace new lines with spaces so as to prevent several entries which
            # would trigger several errors.
            error_message = traceback.format_exc().replace('\n', ' ')
            logging.error(error_message)
            return 'Error'
        return response
    return wrapper

# Example hello world function
@try_catch_log
def python_hello_world(request):
    request_args = request.args
    if request_args and 'name' in request_args:
        # Deliberately raises a TypeError to exercise the wrapper.
        1 + 's'
    return 'Hello World!'

Logs too big in Node.js 10+, Python 3.8, Go 1.13, and Java 11

The max size for a regular log entry in these runtimes is 105 KiB.

The solution

Make sure you send log entries smaller than this limit.
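One rough way to stay under the limit is to truncate long messages before logging them, as in the sketch below. The 1 KiB headroom for the other fields of the entry is an assumption, not a documented figure, and `truncate_message` is a hypothetical helper.

```python
MAX_ENTRY_BYTES = 105 * 1024  # limit stated above
HEADROOM_BYTES = 1024         # assumed allowance for the entry's other fields


def truncate_message(message: str,
                     limit: int = MAX_ENTRY_BYTES - HEADROOM_BYTES) -> str:
    """Return `message` cut to at most `limit` UTF-8 bytes, marking any cut."""
    data = message.encode("utf-8")
    if len(data) <= limit:
        return message
    marker = "...[truncated]"
    keep = limit - len(marker.encode("utf-8"))
    # errors="ignore" drops a multi-byte character split by the byte slice.
    return data[:keep].decode("utf-8", errors="ignore") + marker
```

Measuring in encoded bytes rather than characters matters for non-ASCII text, where one character can take several bytes.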

Cloud Functions logs are not appearing in Log Explorer

Some Cloud Logging client libraries use an asynchronous process to write log entries. If a function crashes, or otherwise terminates, it is possible that some log entries have not been written yet and may appear later. It is also possible that some logs will be lost and cannot be seen in Log Explorer.

The solution

Use the client library interface to flush buffered log entries before exiting the function or use the library to write log entries synchronously. You can also synchronously write logs directly to stdout or stderr.

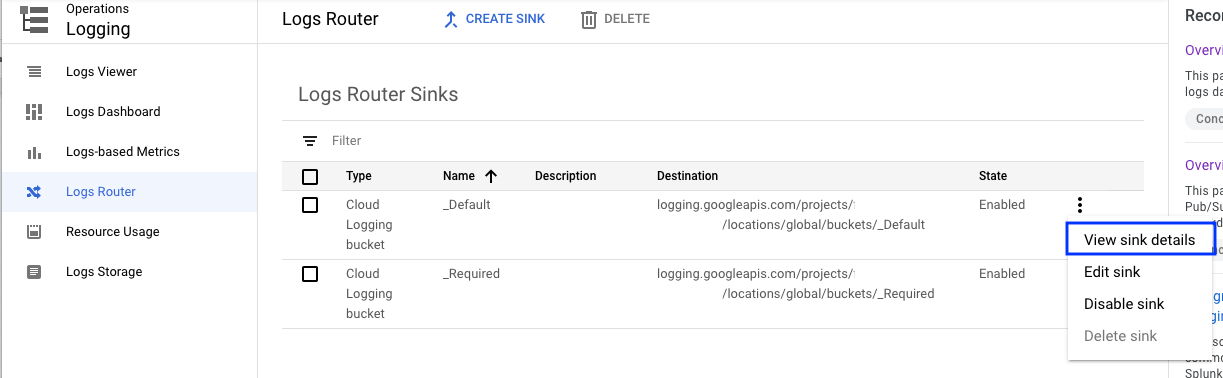

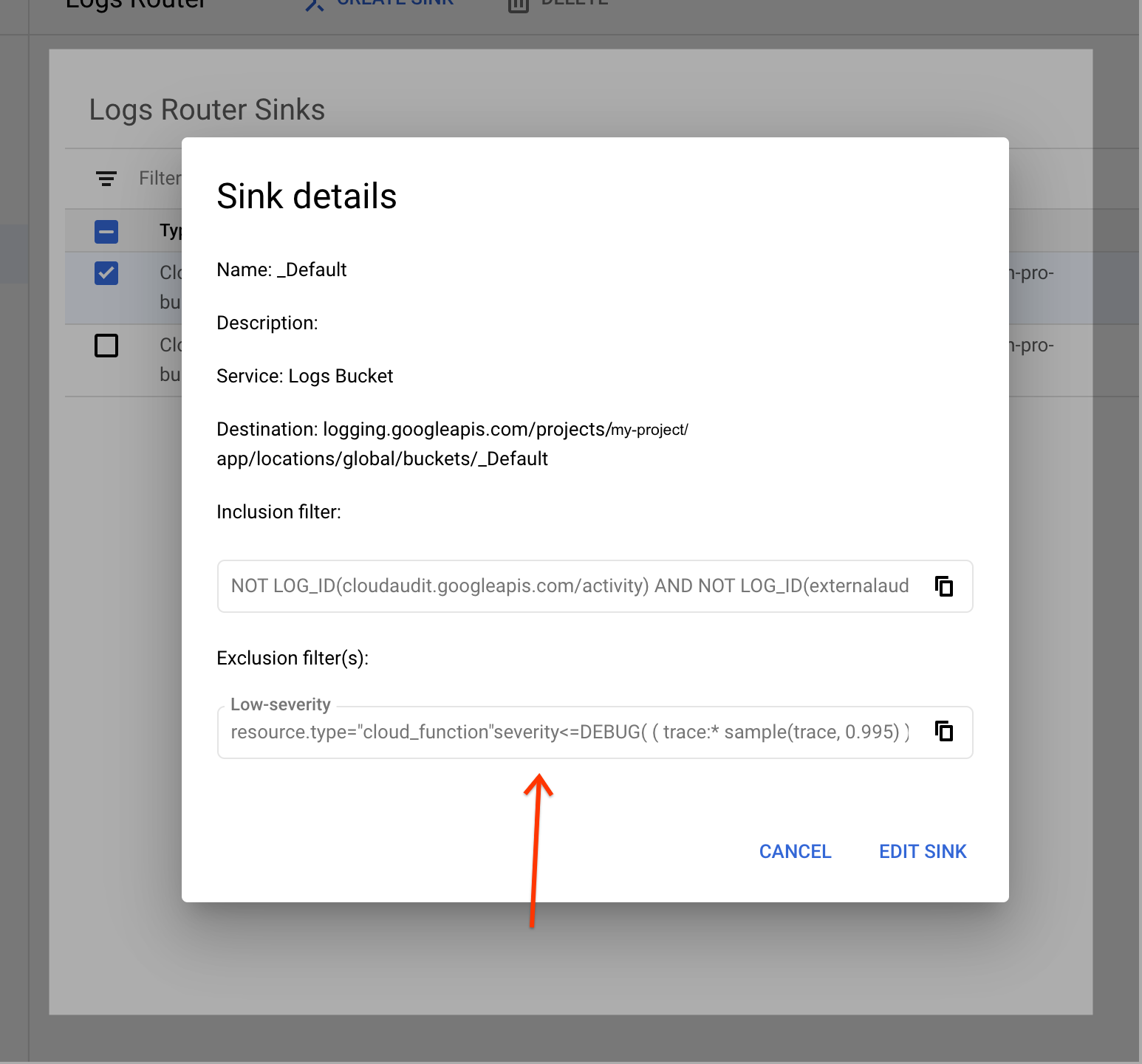

Cloud Functions logs are not appearing via Log Router Sink

Log entries are routed to their various destinations using Log Router Sinks.

Included in the settings are Exclusion filters, which define entries that can simply be discarded.

The solution

Make sure no exclusion filter is set for resource.type="cloud_functions"

Database connections

There are a number of issues that can arise when connecting to a database, many associated with exceeding connection limits or timing out. If you see a Cloud SQL warning in your logs, for example, "context deadline exceeded", you might need to adjust your connection configuration. See the Cloud SQL docs for additional details.


Source: https://cloud.google.com/functions/docs/troubleshooting

Posted by: gerribeferal.blogspot.com